Presumably, everyone values their privacy. Yet the way the internet and the online world are evolving suggests otherwise. Never before in history has so much information about individuals been so easily available on a platform. People willingly share information online with their friends, acquaintances and, very often, even strangers. So does that mean people have suddenly stopped caring about their privacy?

John Palfrey and Urs Gasser, in their book Born Digital: Understanding the First Generation of Digital Natives, explain this paradox. They argue that “many young people believe their conversations online are far more private than they are.” Moreover, very few of this first generation of “digital natives” are looking into the future to understand what the implications of such widespread sharing might be.

Sure enough, there are password-protected sites, private databases and sites that are not open to crawling by search engines. But both kinds of sites – the ones intended for a public audience and those meant to be restricted to a private group – leave behind a digital imprint. ChoicePoint and companies like it act as private aggregators of individual-level data, combining data from public sources as well as private databases. These companies generate revenue largely by selling this information to whoever wants it (ChoicePoint was generating $1bn in revenues in 2006). But there is no guarantee that these companies will not suffer breaches of this data (as happened with ChoicePoint and many others, such as AOL and T.J. Maxx), and such breaches have serious implications for those whose digital dossiers are compromised.

What is this data used for? Primarily, it is used for targeted advertising. While there is always the threat of the “bad guys” getting access to this data, even firms that claim to “Do No Evil” are collecting data that they themselves could very well misuse at some point.

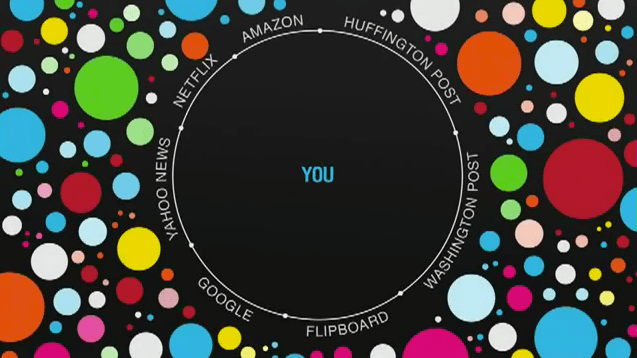

It is worth noting that this data is not used only for targeted advertising, nor is the loss of privacy the only negative implication. Increasingly, firms like Google and Facebook pass this information through algorithmic filters to decide what they want us to see. This “Filter Bubble” – which Jonathan Stray, in his article “Are we stuck in Filter Bubbles?”, essentially describes as “the worry that our personalized interfaces to the Internet will end up telling us only what we want to hear, hiding everything unpleasant but important” – is equally scary for some. It also has serious implications for how our society’s views are shaped, and for how much people are exposed to the “other”.

With both these phenomena – the seeming loss of control over our privacy and the danger of the filter bubble – things are not as black and white as some of those who have written extensively about them may suggest. Targeted advertising helps users find what they like more easily. Google’s personalized results and Facebook’s customized newsfeed mean that I can navigate the plethora of information available on the web today much more easily. Moreover, these social networks, as Christakis and Fowler explain in their book Connected: The Surprising Power of Our Social Networks and How They Shape Our Lives, enhance our personal and professional ties, which in turn enrich our lives and our productive activity.

As a busy student and professional, I value my time. I get frustrated when I have to spend too much time looking for something online. I like the fact that Facebook helps me easily stay in touch with people I would otherwise lose touch with entirely. I like that LinkedIn lets me build up my professional network and retain connections to those I meet offline. Would I prefer that these networks and sites not know anything about me? Would I prefer to go back to an unpersonalized online world? Would I want to think twice before I search for something on Google? I don’t think so.

But neither would I want certain pieces of information about me to be easily accessible on the internet. I do not want to deal with the implications of identity theft or credit card theft. The trade-offs we face, then, are as difficult to resolve as they are real.

New technology in society always comes with such trade-offs. Whether it’s gunpowder, TV or nuclear fission, new innovations, products and technologies bring huge benefits along with opportunities for abuse or misuse. So in a way, the issues and trade-offs we are discussing are not new (well, the filter bubble, as Stray argues, existed in the 1960s too!). And so the resolution, to me, is not so hard to find either (at least at a high level). As with other inventions and technologies, we will have to learn how to deal with it, manage it and, to some extent, put in place the right mechanisms to prevent its abuse.

And while I generally agree with the recommendations put forth by Stray, the devil will ultimately lie in the details (and the execution of this plan). Yes, let’s build better personalization algorithms, let’s curate journalism more, let’s map out the whole web, and yes, let’s start figuring out what we really want. All these things are easier said than done.

We have faced this struggle in my startup, Findable.in, so I have seen this from both a user and a company perspective. As people search for products on our site, we have to decide which ones to show at the top. Do we show the products that are most popular, the cheapest, or the ones from brands that pay us the most? Which criteria do we prioritize? For now, we’ve decided not to decide for the user. We’ve given users the option to choose which criterion matters most to them and to sort the results accordingly.
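To make the idea concrete, here is a rough Python sketch of what user-selected sorting might look like. It is purely illustrative and not our actual code: the `Product` fields, the option names in `SORT_OPTIONS` and the `sort_products` function are all assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    # Hypothetical fields, chosen only for illustration.
    name: str
    price: float
    popularity: int  # e.g. a count of views or purchases


# Each option the user can pick maps to a sort key and a direction
# (True means descending). The option names are assumptions.
SORT_OPTIONS = {
    "price_low_to_high": (lambda p: p.price, False),
    "price_high_to_low": (lambda p: p.price, True),
    "most_popular": (lambda p: p.popularity, True),
}


def sort_products(products, option):
    """Return a new list ordered by the criterion the user selected."""
    if option not in SORT_OPTIONS:
        raise ValueError(f"Unknown sort option: {option!r}")
    key, descending = SORT_OPTIONS[option]
    return sorted(products, key=key, reverse=descending)


catalog = [
    Product("kettle", 25.0, 120),
    Product("toaster", 40.0, 300),
    Product("blender", 15.0, 80),
]

# The user, not the site, decides which ordering to see.
for product in sort_products(catalog, "most_popular"):
    print(product.name, product.price, product.popularity)
```

Note that nothing in this sketch ranks products by how much a brand pays; the ordering depends only on the option the user chooses.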

That suggests a broader way of resolving these issues. Let companies not decide for users – let users decide for themselves what they want. Sure, this might lead to UI issues – but let’s give them these options. I believe that users might not know what’s best for them today, but they will play with the different sort options on Findable and eventually figure it out. And yes, those actions and the data that we collect will help us understand our own users better. By learning what matters more to them, hopefully we can slowly but surely start to serve them better. Will we face the same issues that a Google or a Facebook does? Sure, we will. Can we misuse the information that we can track? Sure, we can. While for us aggregate-level data is much more meaningful than individual-level data, will we be prone to security breaches? Sure, we will.

These issues are very real, and very close to everyone’s lives. But just as we are still grappling with the negative and positive implications of nuclear technology, I believe that this debate will only become more complex with time. Hopefully, our ability to deal with it and manage it will also increase over time. Again, easier said than done!

photo credit: brainpickings.org
